A Real-Time and Low Calculation Cost Lane Recognition and Position Estimation Algorithm

The automobile industry is heading in a new direction: in the last few years, many driving-assist features have been introduced in cars, and car manufacturers, technology companies, and ridesharing companies have been testing self-driving cars. These smart features can reduce the likelihood of accidents caused by human error, increase comfort, and increase road capacity when many cars cooperate with each other automatically.

Some driving-assist features, such as lane departure warning, lane keeping, and self-driving, require information about the car’s position relative to the road. The position of the car relative to the road lane is one of the most crucial pieces of information for a self-driving car navigating an urban road environment. To be practical, the position estimate must be obtained in real time and with high accuracy. An algorithm implemented in a car should also not use too much energy, to keep the car’s fuel consumption low. In battery-operated systems, computational power is generally limited; hence a low calculation cost algorithm is preferred.

Research on vision-based lane recognition systems has been carried out with various approaches. Most of them use the Hough transform to detect lines in the image [1] [2] [3] [4] [5]; to recognize the lane, however, various methods are used, such as fuzzy reasoning [6], Gabor filters [7] [8], geometrical models [1] [9], and color features [2] [10]. Some approaches use perspective mapping to remove the skew caused by projecting road plane coordinates onto camera coordinates. Some use warp perspective mapping [7] [4] to estimate the bird’s-eye view of the lane, while others use inverse perspective mapping [8]. Many of these approaches specifically handle curved lanes [7] [1] [2] [4], while others handle only straight lanes.

However, many of these approaches require a relatively high calculation cost, which makes them difficult to implement in power-limited vehicles. A real-time, low-cost algorithm that can recognize the road lane and estimate the vehicle’s position relative to it is therefore necessary. This article introduces one such approach, which uses the probabilistic Hough transform [11] integrated in the OpenCV library to speed up line detection. Instead of warp perspective mapping, the proposed approach uses inverse perspective mapping [8] to deskew the lines, which is faster. Moreover, rather than mapping the whole image, the proposed approach performs line detection first and maps only the detected line segments, which further speeds up calculation. To improve lane recognition accuracy, it also uses a priori known color information about the lane markings. The algorithm recognizes only straight lanes, however, assuming that the turning radius is large enough that the lane in front of the vehicle can be approximated as straight up to an adequate distance proportional to the speed of the vehicle.

Furthermore, the algorithm estimates the lateral position of the vehicle with respect to the center of the lane and the orientation of the vehicle with respect to the lane direction. The algorithm is tested on a 1:10 scale miniature car with a small ARM-based processing board, and the accuracy of the lane recognition, the lateral position estimation, and the orientation estimation is evaluated on a miniature road lane.

The Lane Marking Detection

Assuming that the camera’s optical axis is parallel to the flat road lane, the vanishing point of the lane lies on the center row of the captured image. The information above the center row can therefore be neglected, and further image processing is applied only to the lower half of the image to improve calculation speed.

Lane markings are generally designed to show a drastic change in brightness at their edges. We can therefore convert the color image to grayscale and apply an edge detection operator to obtain the edge information in a much smaller binary edge image.

Since the lane is assumed to be straight up to an adequate distance, most of the lane markings will show up as straight lines. Lane markings farther from the camera are projected onto fewer pixels in the image. Hence the Hough transform of the edge image will mainly detect lines related to lane markings near the camera.

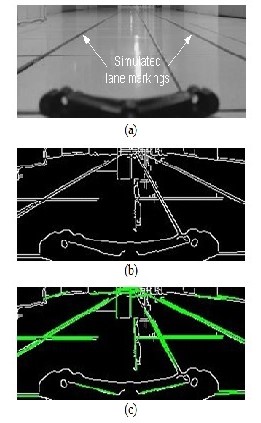

Fig. 1 shows an example of the implemented lane marking detection process: the lower half of the color image from the camera is converted into a grayscale image, the Canny edge detection operator is applied to mark edges in the image, and finally the progressive probabilistic Hough transform detects line segments in the binary edge image, shown as bold green lines in the figure. Note that the dark structure at the bottom middle of the image is part of the miniature vehicle protruding into the camera’s view.

Fig. 1. Example of the line detection process, where (a) is the grayscale image, (b) is the edge binary image, and (c) shows the detected lines in bold green.

The Inverse Perspective Mapping

The current algorithm is optimized to detect solid lane markings. It focuses on searching for long solid lines in the image and omits short, discontinuous line features. This eliminates false detections from irregularly shaped structures in the image; however, depending on the environment, the image can contain many long straight features other than the lane markings, as in Fig. 1(c). This noise, together with the perspective in the image, complicates lane marking recognition in the image plane.

To correct the perspective view of the scene, perspective mapping methods such as warp perspective mapping (WPM) and inverse perspective mapping (IPM) can be used. These mappings yield a bird’s-eye view estimate of the scene in which the lane markings are parallel to each other. These methods usually map the whole image, as in [7] [8]. To minimize calculation cost, the mapping of the image can be reduced to the mapping of the line segments using IPM, which involves mapping only the start and end coordinates of each line segment.
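As a minimal sketch of mapping only the segment endpoints, the code below assumes an ideal pinhole camera whose optical axis is parallel to a flat road, as stated earlier. The article does not give its mapping equations, so the function names, the principal point at the image center, and the focal length of 250 pixels are all assumptions; only the 105 mm camera height and the 320 × 240 resolution come from the text.

```python
def pixel_to_road(u, v, focal_px, cam_height_mm, cx, cy):
    """Map an image pixel below the horizon row to road-plane (x, z) in mm."""
    dv = v - cy  # rows below the horizon row have dv > 0
    if dv <= 0:
        raise ValueError("pixel is on or above the horizon row")
    z = focal_px * cam_height_mm / dv  # forward distance from the camera
    x = (u - cx) * cam_height_mm / dv  # lateral offset, positive to the right
    return x, z


def map_segment(segment, focal_px, cam_height_mm, cx, cy):
    """Map both endpoints of an image segment (x1, y1, x2, y2) to the road plane."""
    x1, y1, x2, y2 = segment
    return (pixel_to_road(x1, y1, focal_px, cam_height_mm, cx, cy),
            pixel_to_road(x2, y2, focal_px, cam_height_mm, cx, cy))


if __name__ == "__main__":
    FOCAL_PX = 250.0         # hypothetical focal length in pixels
    CAM_HEIGHT_MM = 105.0    # camera height reported in the article
    CX, CY = 160.0, 120.0    # assumed principal point of a 320x240 image
    # An image line that points at the vanishing point maps to constant x.
    print(map_segment((60, 220, 110, 170), FOCAL_PX, CAM_HEIGHT_MM, CX, CY))
```

Each segment costs two divisions per endpoint, whereas warping the whole image touches every pixel.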

The resulting line segments that belong to the lane markings will be parallel to each other in road plane coordinates. This simplifies lane recognition, since other interfering lines are less likely to have the same property. Even if multiple pairs of parallel lines are found, we can further search for a pair of parallel lines whose separation equals the distance between the actual lane markings, which is usually known.

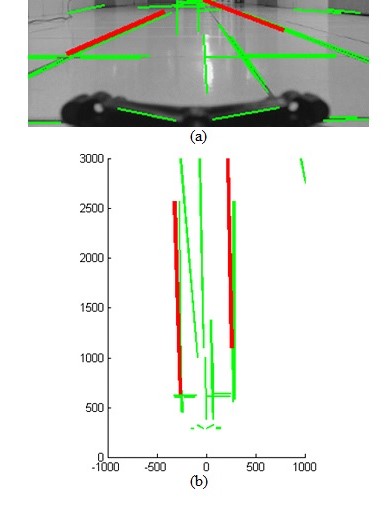

An example of the inverse perspective mapping process is depicted in Fig. 2, where the line segments detected by the Hough transform are mapped into the road plane coordinate system. Although there are multiple pairs of nearly parallel lines, by matching the distance between nearly parallel line segments we can choose a pair of line segments (in red) that lie at the expected position of the simulated lane markings. Note that multiple pairs of line segments meet the criteria, but longer line segments are given higher priority.

Fig. 2. Example of the inverse perspective mapping process, where (a) shows the detected line segments and (b) is the resulting mapped line segments in road plane coordinate (in mm). The red lines are a possible pair of lane markings.
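One way to express this pair selection is sketched below: keep pairs that are nearly parallel and separated by roughly the known lane width, then prefer the pair with the greatest total length. The scoring rule, the tolerances, and the function names are assumptions for illustration; the article only states that longer segments have higher priority.

```python
import math
from itertools import combinations


def _length(seg):
    (x1, z1), (x2, z2) = seg
    return math.hypot(x2 - x1, z2 - z1)


def _angle(seg):
    """Undirected line angle in [0, pi)."""
    (x1, z1), (x2, z2) = seg
    return math.atan2(z2 - z1, x2 - x1) % math.pi


def _line_distance(seg_a, seg_b):
    """Perpendicular distance from the midpoint of seg_b to the line through seg_a."""
    (ax1, az1), (ax2, az2) = seg_a
    (bx1, bz1), (bx2, bz2) = seg_b
    mx, mz = (bx1 + bx2) / 2.0, (bz1 + bz2) / 2.0
    cross = (ax2 - ax1) * (mz - az1) - (az2 - az1) * (mx - ax1)
    return abs(cross) / _length(seg_a)


def pick_lane_pair(segments, lane_width_mm, angle_tol_deg=5.0, width_tol_mm=30.0):
    """Return indices (i, j) of the best lane-marking pair, or None if none qualifies."""
    best, best_score = None, 0.0
    for i, j in combinations(range(len(segments)), 2):
        a, b = segments[i], segments[j]
        if _length(a) == 0 or _length(b) == 0:
            continue
        diff = abs(_angle(a) - _angle(b))
        diff = min(diff, math.pi - diff)  # angles wrap around at pi
        if math.degrees(diff) > angle_tol_deg:
            continue
        if abs(_line_distance(a, b) - lane_width_mm) > width_tol_mm:
            continue
        score = _length(a) + _length(b)  # longer segments get priority
        if score > best_score:
            best, best_score = (i, j), score
    return best


if __name__ == "__main__":
    # Road-plane segments in mm; the 480 mm spacing matches the 48 cm test lane.
    segments = [((-240, 200), (-240, 800)), ((240, 250), (240, 700)),
                ((0, 300), (300, 500)), ((240, 300), (240, 400))]
    print(pick_lane_pair(segments, lane_width_mm=480.0))
```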

The Color Matching

Furthermore, to improve recognition accuracy by reducing false positives, a color requirement is added for a pair of line segments to qualify as the recognized lane markings. The color matching process searches the pixels of the original color image for a known color feature of the lane markings. This color feature, expressed in the HSI (hue-saturation-intensity) color space, can be obtained in advance from the actual lane markings, as in [2]. The HSI color space represents the color information as hue and saturation values, separately from the intensity, so the color information is independent of the illumination intensity of the image. Therefore, we can define ranges of hue, saturation, and intensity values that fit the known lane markings under various lighting conditions.
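The range test can be illustrated with the standard geometric RGB-to-HSI conversion. The hue, saturation, and intensity ranges below are hypothetical values picked for a dark blue marking, not the ranges used in the article, and the function names are likewise assumptions.

```python
import math


def rgb_to_hsi(r, g, b):
    """Convert 8-bit RGB to (hue in degrees, saturation 0..1, intensity 0..1)."""
    r, g, b = r / 255.0, g / 255.0, b / 255.0
    intensity = (r + g + b) / 3.0
    saturation = 0.0 if intensity == 0 else 1.0 - min(r, g, b) / intensity
    num = 0.5 * ((r - g) + (r - b))
    den = math.sqrt(max(0.0, (r - g) ** 2 + (r - b) * (g - b)))
    if den == 0:
        hue = 0.0  # gray pixel: hue is undefined, 0 is used by convention
    else:
        hue = math.degrees(math.acos(max(-1.0, min(1.0, num / den))))
        if b > g:
            hue = 360.0 - hue
    return hue, saturation, intensity


# Hypothetical ranges for a dark blue lane marking.
HUE_RANGE = (200.0, 260.0)
SAT_RANGE = (0.3, 1.0)
INT_RANGE = (0.05, 0.6)


def is_lane_color(rgb):
    """True if the pixel falls inside all three assumed HSI ranges."""
    hue, sat, inten = rgb_to_hsi(*rgb)
    return (HUE_RANGE[0] <= hue <= HUE_RANGE[1]
            and SAT_RANGE[0] <= sat <= SAT_RANGE[1]
            and INT_RANGE[0] <= inten <= INT_RANGE[1])


def color_match_ratio(pixels):
    """Fraction of the given RGB pixels that match the lane color."""
    if not pixels:
        return 0.0
    return sum(1 for p in pixels if is_lane_color(p)) / len(pixels)


if __name__ == "__main__":
    sampled = [(20, 30, 120), (25, 35, 110), (220, 220, 220), (200, 20, 20)]
    print(color_match_ratio(sampled))
```

A candidate segment could then be accepted when the ratio over pixels sampled along it exceeds a chosen threshold; that threshold is another tuning assumption.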

The Performance of the Algorithm

The algorithm is implemented on a BeagleBone board, which uses a 720 MHz ARM Cortex-A8 processor running the Linux operating system. Many of the basic image processing functions, such as image conversion, Canny edge detection, and the progressive probabilistic Hough transform, use the OpenCV library. The camera is a Logitech C920 webcam with a view angle of 78°, and the image resolution is set to 320 × 240 pixels. The camera is mounted about 105 mm above the floor. The simulated lane markings are two dark blue adhesive tapes placed 48 cm apart. The tapes are 1 cm wide, and the maximum separation error is ±5 mm along the length of the simulated lane.

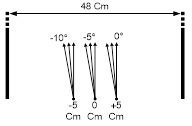

To measure the accuracy of the orientation angle and lateral error estimates, we placed the camera at three different positions with lateral errors of −5 cm, 0 cm, and +5 cm. At each position we evaluated three orientation error angles: −10°, −5°, and 0°. The camera was positioned by hand; we therefore assumed a maximum position error of ±1 mm and a maximum angle error of ±1° in placement. At each position and angle, we performed nine trials to recognize the lane and to estimate the lateral and orientation errors. The arrangement of the simulated lane and the placement of the camera for the evaluation are shown in Fig. 3.

Fig. 3. Placement of the camera for accuracy evaluation.

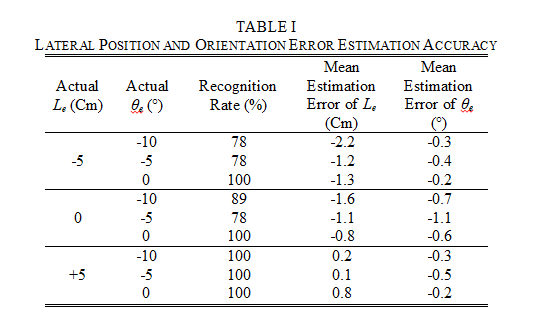

The recognition rate column in Table 1 lists the percentage of successful lane recognitions over nine trials for each configuration. The recognition rate tends to decrease as the orientation error grows. The problem is worse when the lateral error shifts in the same direction as the orientation error, because the camera captures a smaller portion of the lane marking on one side as its optical axis points toward the other side. One example of this condition is shown in Fig. 7, where the lateral error is −5 cm and the orientation error is −10°. Although line segments are detected at the position of the right lane marking in Fig. 7(a), it is difficult to detect the color feature because of the distance (Fig. 7(b)). A camera with a wider view angle would enable lane recognition at larger orientation angles.

Table 1 also lists the mean estimation error of the lateral error Le. This value is the difference between the mean of the estimated lateral error values and the actual lateral error. A larger estimation error is observed when the camera is oriented in the same direction as the lateral shift. The problem is worst in the sample case in Fig. 7(a): even when the algorithm manages to detect the right lane marking, the position of the right line segment is less accurate because its distance from the camera is larger. On the other hand, the estimation improves when the camera has a close view of the lane markings.

The accuracy of the orientation error θe estimation listed in Table 1 is very high: the estimation error is less than 1 degree in almost all cases.
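The article does not spell out how Le and θe are computed from the recognized pair, so the following is only one plausible formulation. It assumes the two lane markings are given as road-plane segments in camera coordinates (x to the right, z forward, in mm), takes θe as the mean heading of the two segments relative to the camera's forward axis, and takes Le as the signed perpendicular distance from the camera to the lane centerline. The sign conventions (positive Le when the camera is to the right of the center, positive θe when the lane leans to the right in the camera frame) and the function names are assumptions.

```python
import math


def _forward(seg):
    """Order the segment so that it points away from the camera (increasing z)."""
    p, q = seg
    return (p, q) if q[1] >= p[1] else (q, p)


def _heading(seg):
    """Angle of the segment relative to the camera's forward (z) axis, in radians."""
    (x1, z1), (x2, z2) = _forward(seg)
    return math.atan2(x2 - x1, z2 - z1)


def _midpoint(seg):
    (x1, z1), (x2, z2) = seg
    return (x1 + x2) / 2.0, (z1 + z2) / 2.0


def estimate_pose(left_seg, right_seg):
    """Return (lateral error in mm, orientation error in degrees)."""
    theta = (_heading(left_seg) + _heading(right_seg)) / 2.0
    (lx, lz), (rx, rz) = _midpoint(left_seg), _midpoint(right_seg)
    cx, cz = (lx + rx) / 2.0, (lz + rz) / 2.0  # a point on the lane centerline
    # Project the vector from that point to the camera origin onto the lane normal.
    lateral = -(cx * math.cos(theta) - cz * math.sin(theta))
    return lateral, math.degrees(theta)


if __name__ == "__main__":
    # Camera 50 mm left of the center of a 480 mm wide lane, heading along the lane.
    left = ((-190.0, 200.0), (-190.0, 800.0))
    right = ((290.0, 300.0), (290.0, 700.0))
    print(estimate_pose(left, right))
```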

Evaluation of the calculation speed showed that the BeagleBone board can execute the algorithm fast enough to produce 4.2 estimates of the lateral error and orientation error per second, or about 240 ms per estimate. This rate is adequate in most cases for lateral control of the vehicle at normal forward speed.

The most time-consuming calculation in the algorithm is the Hough transform, which consumes approximately 80% of the calculation time. The authors later found a similar lane recognition system in [5], implemented using the Hough transform on a Blackfin 561 DSP, that achieved a much higher recognition rate. This suggests that it is possible to significantly improve the calculation speed using the same algorithm with a different hardware implementation.

This article is contributed by Sofyan, S.Kom., M.Eng., lecturer in BINUS ASO School of Engineering.

References

[1] Shengyan Zhou et al., "A novel lane detection based on geometrical model and Gabor filter," in IEEE Intelligent Vehicle Symposium, San Diego, CA, 2010, pp. 59-64.

[2] Ping Kuang, Qinxing Zhu, and Xudong Chen, "A Road Lane Recognition Algorithm Based on Color Features in AGV Vision Systems," in International Conference on Communications, Circuits and Systems, Guilin, 2006, vol. 1, pp. 475-479.

[3] Shih-Shinh Huang, Chung-Jen Chen, Pei-Yung Hsiao, and Li-Chen Fu, "On-board vision system for lane recognition and front-vehicle detection to enhance driver's awareness," in IEEE International Conference on Robotics and Automation, Taipei, May 2004, vol. 3, pp. 2456-2461.

[4] Fangfang Xu, Bo Wang, Zhiqiang Zhou, and Zhihui Zheng, "Real-time lane detection for intelligent vehicles based on monocular vision," in 31st Chinese Control Conference, Hefei, 2012, pp. 7332-7337.

[5] Stephen P. Tseng, Yung-Sheng Liao, and Chih-Hsie Yeh, "A DSP-based Lane Recognition Method for the Lane Departure Warning System of Smart Vehicles," in Conference on Networking, Sensing and Control, Okayama, Japan, 2009, pp. 823-828.

[6] Ping Kuang, Qingxin Zhu, and Guochan Liu, "Real-time road lane recognition using fuzzy reasoning for AGV vision system," in International Conference on Communications, Circuits and Systems, June 2004, vol. 2, pp. 989-993.

[7] F. Coşkun, O. Tunçer, M.E. Karsligil, and L. Güvenç, "Real time lane detection and tracking system evaluated in a hardware-in-the-loop simulator," in International IEEE Conference on Intelligent Transportation Systems, Funchal, 2010, pp. 1336-1343.

[8] A.M. Muad, A. Hussain, S.A. Samad, M.M. Mustaffa, and B.Y. Majlis, "Implementation of Inverse Perspective Mapping Algorithm for the Development of an Automatic Lane Tracking System," in IEEE Region 10 Conference TENCON 2004, 2004, vol. 1, pp. 207-210.

[9] A. AM. Assidiq, O. O. Khalifa, Md. Rafiqul Islam, and Sheroz Khan, "Real Time Lane Detection for Autonomous Vehicles," in International Conference on Computer and Communication Engineering, Kuala Lumpur, 2008, pp. 82-88.

[10] M. Caner Kurtul, "Road Lane and Traffic Sign Detection & Tracking for Autonomous Urban Driving," Master Thesis, Bogazici University, 2010.

[11] J. Matas, C. Galambos, and J.V. Kittler, "Robust Detection of Lines Using the Progressive Probabilistic Hough Transform," Computer Vision and Image Understanding, vol. 78, no. 1, pp. 119-137, April 2000.
